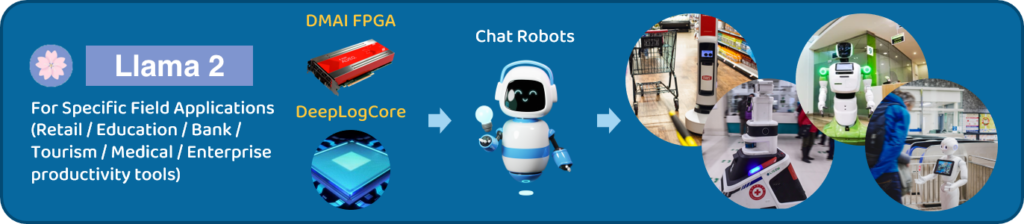

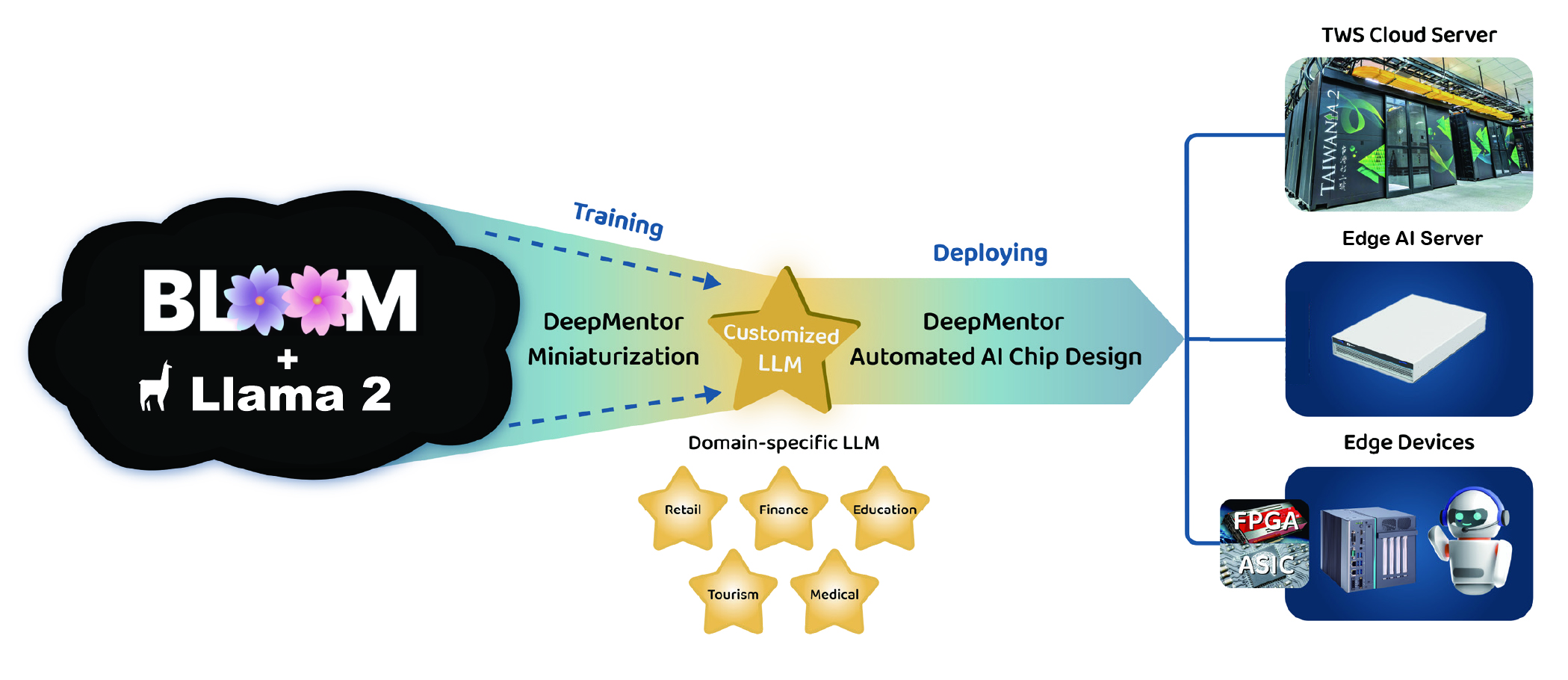

On-premises Solution

Customized LLM

Domain-specific generative conversational AI

We help clients launch highly competitive, proprietary, domain-specific generative conversational AI services, or train AI models internally on their own data. We offer end-to-end integration services to ensure smooth deployment from the cloud to on-premises environments. DeepMentor's exclusive automated LLM training process can speed up the launch of your new AI services so that you can seize market opportunities.

Greatly Reduce Operational Costs

Standardized Integration of Enterprise Databases

Ensuring Data Privacy

High Accuracy of Targeted Content

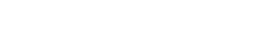

As a result,

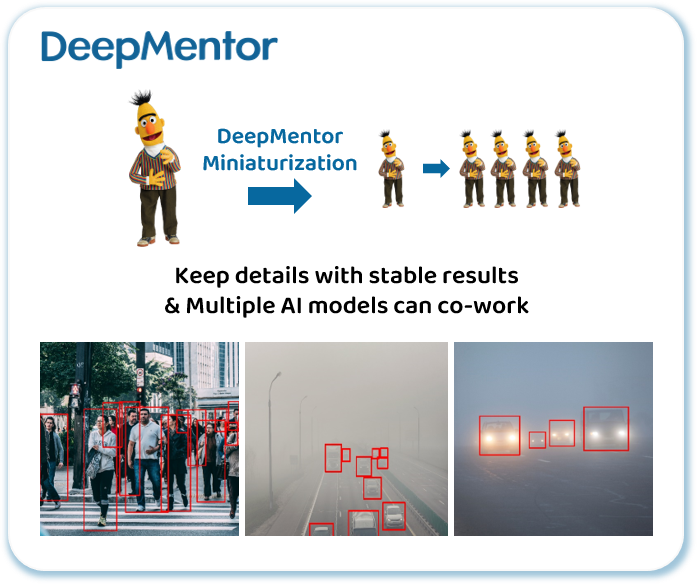

DeepMentor Edge AI can accurately recognize objects from different angles, by day and by night, and in adverse weather conditions.

Other edge AI solutions have to contend with the inaccuracy of small AI algorithms and with cumbersome optimization.

Mentor-300

It supports LLMs with more than 180 billion parameters.

Meeting Expert

Designed for business, it’s portable, secure, and constantly learning from your meeting content.

Ensures the privacy and security of confidential corporate information and users' personal data.

Compared with expensive cloud inference, an on-premises solution can greatly reduce operational costs.

Real-time synchronization and integration of enterprise databases with backend AI service systems.

An LLM trained for a given task and dataset can be optimized for higher accuracy in generating targeted content.

Six functional modules developed exclusively by DeepMentor: Document / Customer Service / Code / Image / Meeting / Fine-tune, for customization and function optimization.

● LLM On-premises Deployment ●